Global Deep Learning Chips Market - Key Trends & Drivers Summarized

Why Are AI Workloads Reshaping The Architecture Of Silicon Compute Platforms?

The rapid shift from conventional software-based analytics toward model-centric computing has fundamentally changed how semiconductors are designed, leading to a new generation of deep learning chips optimized for tensor operations, matrix multiplication throughput, and memory bandwidth rather than general-purpose instruction execution. Modern workloads such as large language models, multimodal generative models, and real-time inference pipelines demand massively parallel arithmetic units capable of handling models with up to trillions of parameters, pushing chipmakers to adopt specialized accelerators including GPUs, TPUs, NPUs, and domain-specific AI ASICs. A key architectural transition is the replacement of clock-speed scaling with parallel compute density scaling, resulting in chip floorplans dominated by tensor cores, systolic arrays, and on-chip SRAM pools that reduce the latency caused by external DRAM access. Memory hierarchy redesign has emerged as a decisive factor, with high-bandwidth memory stacks, chiplet-based interconnect fabrics, and advanced packaging technologies enabling the sustained throughput required by transformer-based models. Training clusters increasingly rely on high-speed interconnect standards that enable distributed gradient synchronization across thousands of accelerators, which in turn drives demand for chips with integrated networking engines and collective communication offload capability. Power efficiency is becoming a design constraint equal in importance to performance because of data center energy budgets, resulting in near-threshold voltage optimization, sparsity acceleration engines, and mixed-precision arithmetic such as FP8 and INT4 inference pathways. Edge computing requirements have created a separate class of low-power deep learning chips capable of performing real-time analytics locally in cameras, industrial machines, and consumer electronics, avoiding the latency and bandwidth constraints of cloud processing.
Automotive perception systems, robotics navigation modules, and augmented reality devices rely on deterministic latency that traditional processors cannot deliver, further expanding specialized chip adoption. Semiconductor vendors now design software stacks alongside hardware, integrating compilers, model optimizers, and runtime orchestration tools so that neural networks can map efficiently onto hardware primitives. This co-design approach ensures that future neural architectures influence silicon layout decisions, reversing the historical relationship between software and hardware development.

Is Generative AI Accelerating A New Era Of Hyperscale Data Center Hardware Investment?

The explosive growth of generative AI services has transformed hyperscale infrastructure planning from storage-centric expansion to accelerator-centric expansion, with data centers now sized according to compute density rather than rack count alone. Training frontier models requires clusters containing tens of thousands of deep learning chips interconnected through high-bandwidth fabrics, creating a new procurement cycle in which compute accelerators become the primary capital expenditure item. Cloud providers increasingly differentiate service offerings based on available AI compute capacity, which encourages continuous hardware refresh cycles as new chip generations deliver large gains in tokens per second and energy efficiency. Rack-level liquid cooling, immersion cooling systems, and advanced thermal management architectures are being deployed to handle the heat flux generated by dense accelerator arrays. Deep learning chips are also influencing storage architectures because training datasets require petabyte-scale throughput, leading to the development of storage-class memory tiers positioned closer to accelerators. Inference serving infrastructure is evolving toward disaggregated compute pools in which accelerators are dynamically allocated to user workloads, requiring chips capable of virtualization and multi-tenant isolation. Telecommunications operators deploy AI inference at network edges to manage traffic routing, anomaly detection, and autonomous network optimization, creating a distributed demand pattern beyond centralized hyperscale facilities. Financial trading firms and scientific research institutions increasingly invest in dedicated AI clusters to accelerate quantitative modeling and simulation workflows. The rapid cadence of model evolution forces compatibility between hardware and frameworks, encouraging standardized accelerator interfaces and portable execution layers that reduce vendor lock-in concerns.
Semiconductor foundries experience sustained wafer demand for advanced process nodes because AI accelerators have larger die areas and higher transistor counts than traditional CPUs. This structural change links semiconductor capacity planning directly to artificial intelligence adoption rates rather than consumer electronics shipment cycles.

How Are Edge Devices Turning Into Intelligent Autonomous Systems Through Embedded AI Silicon?

Deep learning chips designed for embedded environments are enabling a transition from connected sensing devices to independent decision-making systems capable of interpreting real-world signals without cloud assistance. Smart cameras perform object detection and behavior recognition locally, reducing surveillance bandwidth and enabling privacy-preserving analytics. Autonomous vehicles rely on heterogeneous computing modules combining vision accelerators, radar processing units, and neural network inference engines that process sensor fusion data in milliseconds. Consumer electronics manufacturers integrate neural processing units into smartphones and wearables to support on-device language translation, image enhancement, and contextual awareness functions that must operate offline. Industrial automation platforms deploy machine vision inspection powered by dedicated inference chips that maintain the deterministic latency required on production lines. Healthcare monitoring equipment analyzes physiological signals in real time to detect anomalies before data transmission, reducing response time in critical scenarios. Agricultural robotics uses embedded deep learning silicon to navigate fields and identify crop conditions, supporting precision farming workflows without depending on connectivity. Retail environments implement shelf analytics and customer behavior mapping through local inference to minimize network traffic. Robotics platforms in warehouses employ neural inference modules to coordinate movement and object handling autonomously. Power-constrained edge chips use quantization-aware execution and sparse matrix acceleration to achieve high throughput within limited thermal envelopes. Semiconductor designers increasingly produce scalable product families in which a shared architecture spans cloud training accelerators and miniature embedded inference processors, simplifying software portability across deployment environments.
This continuum from cloud to edge reinforces demand for flexible neural compute hardware capable of handling diverse model sizes and performance constraints.

What Forces Are Fueling the Rapid Expansion of Deep Learning Chip Adoption Across Industries?

The growth in the deep learning chips market is driven by several factors, including the expansion of generative AI services that require large-scale model training clusters, the deployment of real-time inference in automotive driver assistance systems and autonomous robotics, and the increasing integration of neural processing units in smartphones and consumer electronics to enable on-device AI features. Rising adoption of AI-based diagnostic imaging and clinical decision systems creates demand for hospital edge inference hardware capable of deterministic processing latency. Telecom operators implementing self-optimizing networks deploy distributed inference accelerators across base stations and core networks. Financial institutions execute fraud detection and risk modeling workloads that require low-latency inference hardware colocated with transaction processing systems. Retail and e-commerce platforms implement recommendation engines and personalization pipelines that rely on continuous model retraining infrastructure within cloud environments. Industrial manufacturers deploy predictive maintenance analytics powered by embedded inference chips connected to sensors on machinery. Growth of video analytics in smart city infrastructure requires dedicated neural processors capable of handling multiple high-resolution streams simultaneously. The rapid increase in parameter counts of foundation models necessitates high-bandwidth memory architectures and multi-chip scaling technologies, which stimulates demand for advanced packaging and chiplet-based accelerator modules. Government investments in domestic semiconductor manufacturing capacity to support national AI strategies further reinforce procurement of specialized AI accelerators. Expansion of edge computing deployments across logistics, healthcare monitoring, and surveillance applications drives adoption of low-power inference silicon.
Continuous improvements in AI frameworks optimized for hardware acceleration encourage enterprises to migrate workloads from CPUs to dedicated deep learning processors, creating sustained demand across both training and inference segments.

Report Scope

The report analyzes the Deep Learning Chips market, presented in terms of market value (US$). The analysis covers the key segments and geographic regions outlined below:

- Segments: Chip Type (GPU Chip Type, ASIC Chip Type, FPGA Chip Type, CPU Chip Type, Other Chip Types); Technology (System-on-Chip Technology, System-in-Package Technology, Multi-Chip Module Technology, Other Technologies); Application (Media & Advertising Application, BFSI Application, IT & Telecom Application, Retail Application, Healthcare Application, Automotive Application, Other Applications)

- Geographic Regions/Countries: World; USA; Canada; Japan; China; Europe; France; Germany; Italy; UK; Rest of Europe; Asia-Pacific; Rest of World.

Key Insights:

- Market Growth: Understand the significant growth trajectory of the GPU Chip Type segment, which is expected to reach US$68.6 Billion by 2032 with a CAGR of 39.8%. The ASIC Chip Type segment is also set to grow at a 39.6% CAGR over the analysis period.

- Regional Analysis: Gain insights into the U.S. market, valued at US$6.0 Billion in 2025, and China, forecasted to grow at a 34.5% CAGR to reach US$28.0 Billion by 2032. Discover growth trends in other key regions, including Japan, Canada, Germany, and Asia-Pacific.

Why You Should Buy This Report:

- Detailed Market Analysis: Access a thorough analysis of the Global Deep Learning Chips Market, covering all major geographic regions and market segments.

- Competitive Insights: Get an overview of the competitive landscape, including the market presence of major players across different geographies.

- Future Trends and Drivers: Understand the key trends and drivers shaping the future of the Global Deep Learning Chips Market.

- Actionable Insights: Benefit from actionable insights that can help you identify new revenue opportunities and make strategic business decisions.

Key Questions Answered:

- How is the Global Deep Learning Chips Market expected to evolve by 2032?

- What are the main drivers and restraints affecting the market?

- Which market segments will grow the most over the forecast period?

- How will market shares for different regions and segments change by 2032?

- Who are the leading players in the market, and what are their prospects?

Report Features:

- Comprehensive Market Data: Independent analysis of annual sales and market forecasts in US$ Million from 2025 to 2032.

- In-Depth Regional Analysis: Detailed insights into key markets, including the U.S., China, Japan, Canada, Europe, Asia-Pacific, Latin America, Middle East, and Africa.

- Company Profiles: Coverage of players such as Achronix Semiconductor Corporation, Advanced Micro Devices, Inc., Alphabet, Inc., Amazon.com, Inc., Baidu, Inc. and more.

- Complimentary Updates: Receive free report updates for one year to keep you informed of the latest market developments.

Some of the companies featured in this Deep Learning Chips market report include:

- Achronix Semiconductor Corporation

- Advanced Micro Devices, Inc.

- Alphabet, Inc.

- Amazon.com, Inc.

- Baidu, Inc.

- BitMain Technologies Holding Company

- Cambrian Technologies

- Cerebras Systems

- Fujitsu Ltd.

- Graphcore Limited

Domain Expert Insights

This market report incorporates insights from domain experts across enterprise, industry, academia, and government sectors. These insights are consolidated from multilingual multimedia sources, including text, voice, and image-based content, to provide comprehensive market intelligence and strategic perspectives. As part of this research study, the publisher tracks and analyzes insights from 43 domain experts. Clients may request access to the network of experts monitored for this report, along with the online expert insights tracker.

Table Information

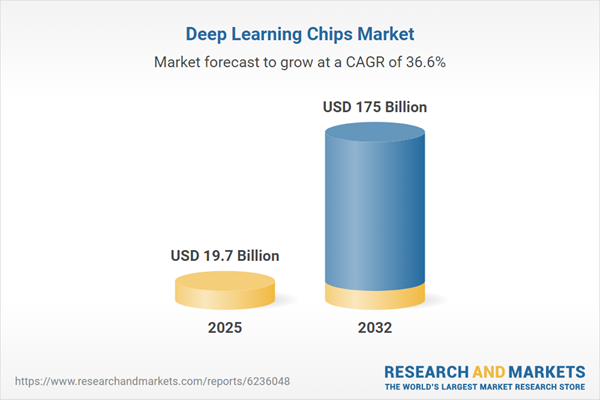

| Report Attribute | Details |
|---|---|
| No. of Pages | 188 |
| Published | May 2026 |
| Forecast Period | 2025 - 2032 |
| Estimated Market Value (USD) | $19.7 Billion |
| Forecasted Market Value (USD) | $175 Billion |
| Compound Annual Growth Rate | 36.6% |
| Regions Covered | Global |
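
The growth rate in the table can be cross-checked from the two market values. The short Python sketch below is illustrative only and is not the publisher's methodology: it assumes the estimated value applies to 2025 and the forecasted value to 2032 (the endpoints of the stated forecast period), giving seven compounding years. The function name `cagr` and the variable names are hypothetical.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.10 means 10%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market values from the table, in US$ billions.
estimated_value = 19.7   # assumed to be the 2025 figure
forecast_value = 175.0   # assumed to be the 2032 figure
years = 2032 - 2025      # assumption: seven compounding periods

rate = cagr(estimated_value, forecast_value, years)
print(f"Implied CAGR: {rate:.1%}")  # prints "Implied CAGR: 36.6%"
```

Under these assumptions the implied rate matches the 36.6% reported in the table; a different base year or period count would give a different figure.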